🇨🇳 DeepSeek just open-sourced a 1.6T AI model built for coding agents and million-token memory

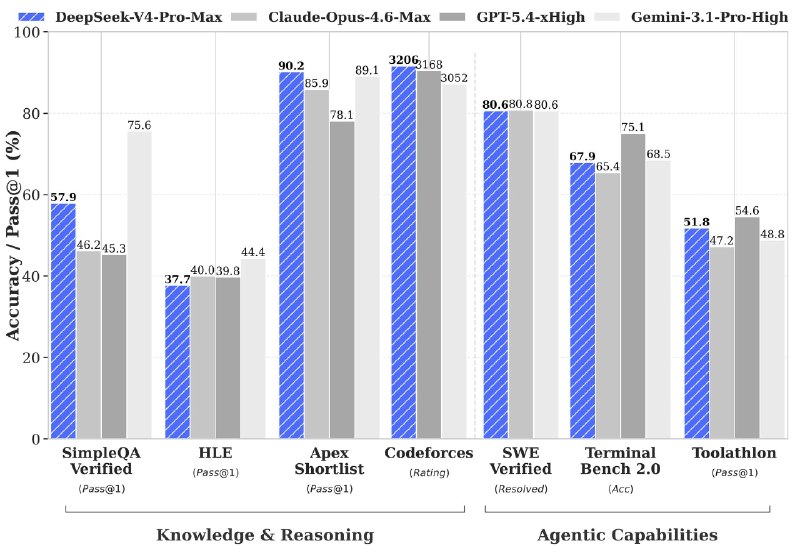

DeepSeek unveiled its V4 generation with two new foundation models: DeepSeek-V4-Pro (1.6 trillion parameters, activating only 49B per token) and DeepSeek-V4-Flash (284B parameters focused on efficiency). The release pushes open-source AI closer to the frontier dominated by closed labs.
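
For a sense of scale, here is a quick back-of-the-envelope check of how sparse V4-Pro's activation is, using only the two figures quoted above. The function and constant names are made up for illustration and do not come from DeepSeek:

```python
# Rough sketch: share of V4-Pro's parameters active per token,
# based only on the figures quoted in the post (1.6T total, 49B active).
# All names here are illustrative, not part of any DeepSeek API.

def active_fraction(total_params: float, active_params: float) -> float:
    """Return the fraction of parameters used for each token."""
    return active_params / total_params

TOTAL_PARAMS = 1.6e12   # 1.6 trillion, as stated in the post
ACTIVE_PARAMS = 49e9    # 49 billion per token, as stated in the post

frac = active_fraction(TOTAL_PARAMS, ACTIVE_PARAMS)
print(f"Active per token: {frac:.2%}")  # Active per token: 3.06%
```

In other words, roughly 3% of the model's weights take part in any single token, assuming the quoted figures are accurate.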

What makes V4 different:

• Introduces DeepSeek Sparse Attention (DSA), a new architecture designed to cut memory and compute costs.

• Uses token-wise compression, allowing far longer context windows without the usua
