⚙️ The real story behind Sber’s GigaChat-3.1 release

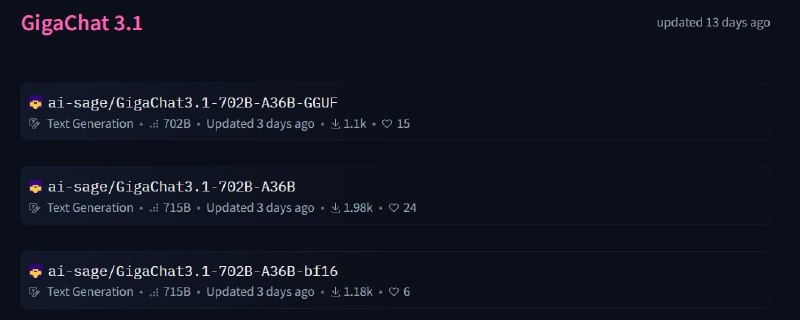

Sber released Ultra (702B) and Lightning (1.8B active) models. Everything is open source under the MIT License.

Performance is strong:

• Ultra > DeepSeek-V3, Qwen3 in reasoning and math

• Lightning ≈ top-of-the-line models, but much smaller

But what stands out is the engineering work behind it.

The team had to solve a core limitation: generation loops, where the model gets stuck repeating the same text, a major quality issue in large systems.

Instead of tuning around it, they:

• Built a custom metric to detect loops

• Reworked the post-training pipeline

• Moved D
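
To make the "custom metric" idea concrete, here is a minimal sketch of one common way to flag loops: measure how many n-grams in an output are repeats of an earlier n-gram. This is an illustration only, not Sber's actual metric (the post does not describe it); the names `repeated_ngram_ratio` and `looks_like_loop`, the whitespace tokenization, and the `n=4` / `threshold=0.5` defaults are all assumptions.

```python
from collections import Counter


def repeated_ngram_ratio(tokens, n=4):
    """Return the fraction of n-grams that repeat an earlier n-gram.

    0.0 means every n-gram is unique; values near 1.0 suggest the
    text is cycling through the same phrase over and over.
    """
    total = len(tokens) - n + 1
    if total <= 0:
        # Too short to contain even one n-gram.
        return 0.0
    counts = Counter(tuple(tokens[i:i + n]) for i in range(total))
    # Each occurrence beyond the first counts as a repeat.
    repeated = sum(c - 1 for c in counts.values())
    return repeated / total


def looks_like_loop(text, n=4, threshold=0.5):
    """Hypothetical helper: flag text whose repeat ratio reaches the threshold."""
    # Whitespace splitting is a stand-in for a real tokenizer (assumption).
    return repeated_ngram_ratio(text.split(), n) >= threshold


if __name__ == "__main__":
    print(looks_like_loop("the cat sat " * 20))
    print(looks_like_loop("Sber released two open models under the MIT License"))
```

A score like this could be tracked over a batch of generations to see whether a pipeline change reduces looping, though a production metric would likely work on model tokens and account for legitimately repetitive outputs such as tables or code.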
