🔥 Kimi AI introduces Attention Residuals, a new way to rethink how neural networks use past layers

Researchers at Moonshot AI just proposed an architecture change intended to help large AI models make more efficient use of information from earlier layers. In place of the traditional residual connections used in deep networks, they introduce Attention Residuals, a mechanism in which each layer can selectively attend to representations from previous layers.

Here’s what’s new:

Attention over past layers:

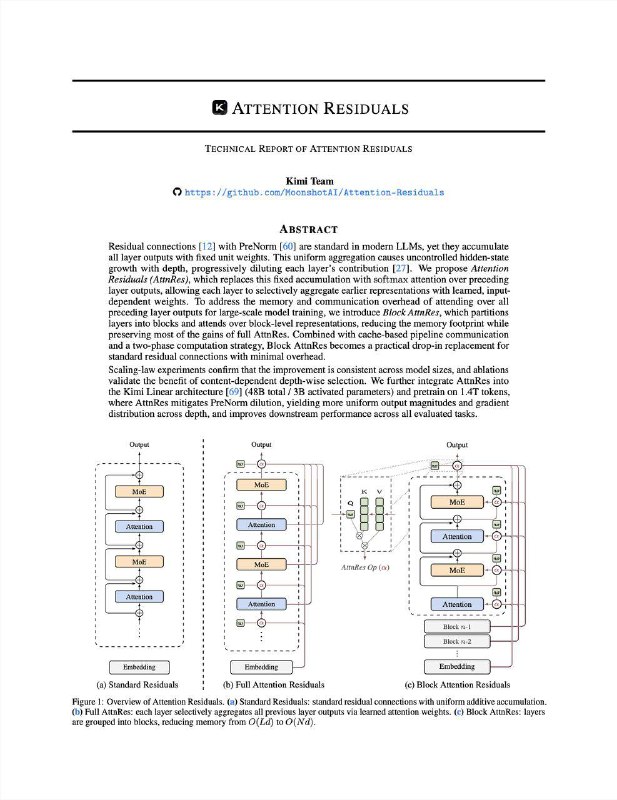

• Traditional residuals simply add outputs from earlier layers with fixed weights that do not depend on the input
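
To make the contrast concrete, here is a minimal toy sketch in plain Python. It is not Moonshot AI's implementation: the function names, the per-layer `query` vector, and the scaled dot-product scoring are all assumptions chosen for illustration. It only shows the general idea of replacing a fixed sum over earlier layers with a softmax-weighted one.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def fixed_residual_mix(layer_outputs):
    """Standard residual stream: every earlier output is added with weight 1."""
    dim = len(layer_outputs[0])
    return [sum(out[d] for out in layer_outputs) for d in range(dim)]

def attention_residual_mix(layer_outputs, query):
    """Softmax-weighted sum of earlier outputs.

    `query` is a hypothetical learned vector for the current layer
    (an assumption for this sketch, not taken from the source).
    """
    scale = math.sqrt(len(query))
    scores = [
        sum(q * x for q, x in zip(query, out)) / scale
        for out in layer_outputs
    ]
    weights = softmax(scores)
    dim = len(layer_outputs[0])
    mixed = [
        sum(w * out[d] for w, out in zip(weights, layer_outputs))
        for d in range(dim)
    ]
    return mixed, weights

# Toy outputs of three earlier layers, each a 2-dimensional vector.
layer_outputs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
query = [2.0, 0.0]  # hypothetical query that favors the first dimension

fixed = fixed_residual_mix(layer_outputs)
mixed, weights = attention_residual_mix(layer_outputs, query)
print("fixed sum:", fixed)
print("attention weights:", weights)
print("attention mix:", mixed)
```

In this toy setup the fixed sum treats all three layers identically, while the attention variant gives more weight to the layers whose outputs align with the query. With an all-zero query the weights become uniform, so the mix reduces to a plain average of the earlier outputs.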
